Input Modulation

As of the 0.9.4 beta, audio input modulation can only be used to modulate the light output within Prism. There are plenty of ways to modulate audio within DAWs, but we recognize the desire to modulate full audio tracks with a unified set of controls directly from Prism.

This has been on our internal wish list as well, and we’ve already been experimenting with evolving this concept within Prism, with compelling results.

We plan to implement this either in an additional beta release or in the imminent commercial release.

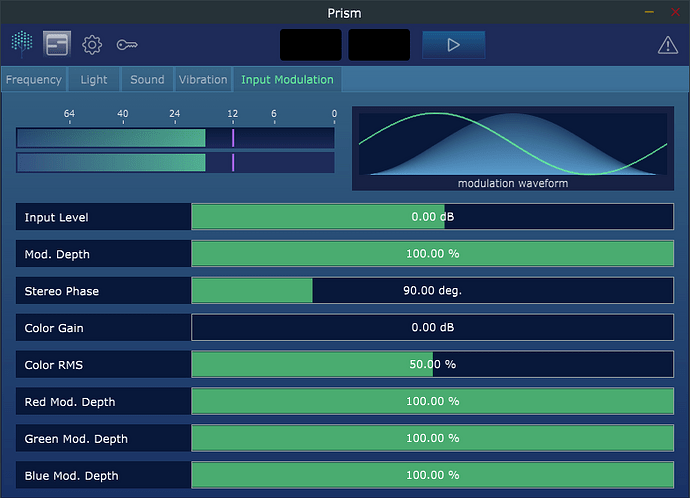

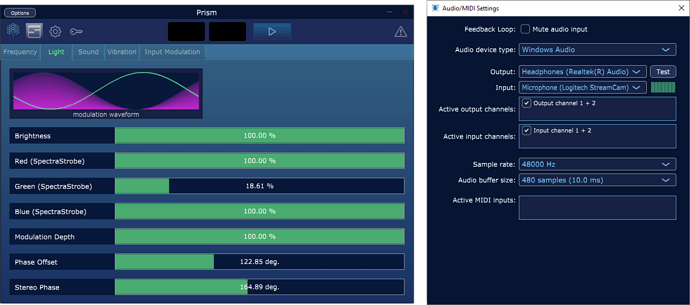

Input Modulation will migrate from the Light editor to its own section/tab within Prism and will have an expanded set of options. These include its own waveform modulator with waveform select, modulation depth, stereo phase, and gain, plus the ability to modulate each color by a different amount, if desired.

As illustrated by the modulation waveform in the image above, the Stereo Phase parameter of all modulation waveforms in Prism will now visualize the phase difference between the left and right channels using a waveform line rendering (the image shows a 90-degree phase difference).
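A stereo phase offset of this kind can be sketched in a few lines. This is only an illustration, not Prism's implementation: the function name `stereo_lfo`, its parameters, the sine waveform, and the choice to apply the offset to the right channel are all assumptions.

```python
import math


def stereo_lfo(t, rate_hz, depth=1.0, stereo_phase_deg=90.0):
    """Return (left, right) modulation values at time t (seconds).

    Hypothetical sketch: a sine modulator whose right channel is
    shifted by stereo_phase_deg relative to the left channel.
    """
    base = 2.0 * math.pi * rate_hz * t          # phase of the left channel
    offset = math.radians(stereo_phase_deg)     # left/right phase difference
    left = depth * math.sin(base)
    right = depth * math.sin(base + offset)
    return left, right


# Sample one cycle of a 1 Hz modulator at quarter-cycle steps.
for step in range(4):
    left, right = stereo_lfo(step / 4.0, rate_hz=1.0)
    print(f"t={step / 4.0:.2f}s  L={left:+.2f}  R={right:+.2f}")
```

With a 90-degree setting, one channel reaches its peak while the other crosses zero; with 0 degrees both channels move together.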

FX Version

In addition to a more robust input modulation section, we are planning on releasing an FX version of the plug-in. This would differ from the virtual instrument version of Prism in two ways:

- The FX version will acquire its input modulation source directly from the audio track it is placed on in the host DAW, eliminating the need for sidechain routing.

- The FX version will lack signal source routing to submix outputs and will only use the default main stereo output (a limitation of effect plug-ins vs. instruments).

You will be able to use Prism solely as an audio-rate LFO effect on any audio track in your DAW through a configuration in Prism Settings.

Standalone Version

We already have a very simple standalone version of Prism. It’s great for getting up and running quickly with minimal headache and for live experimentation. However, it currently lacks sequencing capabilities (timeline, parameter automation, etc.).

If there is clear demand, we plan to release a professional Prism Studio version that includes a full-featured standalone app with full sequencing functionality, render to disk, and asset packs for creating a variety of experiences. Prism Studio would replace the DAW, eliminating any reliance on third-party software.

MIDI

We are re-imagining how Prism will work with MIDI input and we look forward to sharing our progress with everyone in future updates.

We’ve had some interest in being able to drive frequency relationships from the musical pitch of incoming MIDI notes, and we’ve already begun to explore this concept by controlling Prism as an entrainment instrument.
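For reference, the standard equal-temperament conversion from a MIDI note number n to a frequency is 440 × 2^((n − 69) / 12) Hz, with note 69 as A4. The sketch below shows that conversion plus one hypothetical way a modulation rate could be tied to the note by a fixed ratio. The names `midi_note_to_hz` and `related_rate_hz` and the ratio parameter are illustrative assumptions, not part of Prism.

```python
def midi_note_to_hz(note, a4_hz=440.0):
    """Standard 12-tone equal-temperament conversion (MIDI note 69 = A4)."""
    return a4_hz * 2.0 ** ((note - 69) / 12.0)


def related_rate_hz(note, ratio):
    """Hypothetical helper: a modulation rate tied to the note by a fixed ratio."""
    return midi_note_to_hz(note) * ratio


print(midi_note_to_hz(69))            # 440.0 (A4)
print(related_rate_hz(57, 1.0 / 16))  # 13.75 (A3 at 220 Hz, divided by 16)
```

Expressing the rate as a ratio of the note frequency keeps the relationship constant as different notes are played, which is one simple reading of "frequency relationships" here.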

Feature Requests

Please reply to this post with any additional feature requests and let us know how you are using Prism.